This week on the internet

Explore the Mamba AI architecture, links between quantum mechanics and black holes, the secrets of BookCorpus, Akkoma's decentralized social platform, ancient focus techniques and finding your blind spots in our latest newsletter!

We heard from many of you that last week’s newsletter formatting was terrible. Bear with us as we get better at editing on Substack. With that out of the way, let’s look at what we found this week!

Mamba Explained

Move over, Transformers: there is a new AI architecture in town. It's called Mamba, and it promises performance similar to Transformers but with faster inference and better scaling to long sequences. In a Transformer, attention compares every token with every other token, so its cost grows quadratically with sequence length, which leads to high memory usage and slow inference on long inputs. Mamba replaces attention with a State Space Model (SSM) that processes the sequence step by step while carrying a fixed-size state, giving efficient computation and a small memory footprint. The SSM formulation can be interpreted as a kind of recurrent neural network, but one whose parameters depend on the current input, so it can dynamically decide what to remember and what to forget. By selectively compressing its state this way, Mamba aims to strike a better balance between the efficiency of traditional RNNs and the effectiveness of Transformers.
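To make the "RNN with dynamic forgetting" idea concrete, here is a minimal sketch in Python of a selective SSM scan over a sequence of scalar inputs. It assumes a diagonal state matrix `A` with negative entries and a simplified discretization (decay `exp(delta * A)`, input term `delta * B * x`); the weight names `w_delta`, `W_B` and `W_C` are hypothetical. Real Mamba layers work on vectors, learn their projections and use a hardware-aware parallel scan, so treat this as an illustration of the idea rather than the actual implementation.

```python
import math


def softplus(z: float) -> float:
    # Numerically stable log(1 + e^z); keeps the step size strictly positive.
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def selective_scan(xs, A, w_delta, W_B, W_C):
    """Toy selective state space scan over a sequence of scalar inputs.

    xs:      input sequence x_1..x_T (floats)
    A:       N negative floats, a diagonal state matrix, so each state decays
    w_delta: weight mapping x_t to the step size delta_t (hypothetical)
    W_B:     N weights mapping x_t to the input projection B_t (hypothetical)
    W_C:     N weights mapping x_t to the output projection C_t (hypothetical)
    """
    N = len(A)
    h = [0.0] * N  # fixed-size state: does not grow with the sequence length
    ys = []
    for x in xs:
        # "Selective": delta, B and C are recomputed from the current input,
        # so each token controls how much old state is kept or overwritten.
        delta = softplus(w_delta * x)
        B = [b * x for b in W_B]
        C = [c * x for c in W_C]
        for n in range(N):
            decay = math.exp(delta * A[n])  # in (0, 1) because A[n] < 0
            # Large delta: stronger decay (forget) and a larger input term (write).
            h[n] = decay * h[n] + delta * B[n] * x
        ys.append(sum(C[n] * h[n] for n in range(N)))
    return ys


ys = selective_scan([1.0, 0.5, -0.2, 0.0], A=[-1.0, -0.5], w_delta=1.0,
                    W_B=[1.0, 0.5], W_C=[0.3, -0.2])
print(len(ys))  # 4: one output per input token
```

Each step touches only the N-element state, so time grows linearly with sequence length and memory stays constant, whereas attention over T tokens computes T² pairwise scores.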

Link between quantum mechanics, black holes and computing

Physicist Brian Cox explains the engineering challenges of building quantum computers and how delicate the information stored in their memory is. He also points to an unexpected connection between black holes and quantum information storage, where concepts like Planck units, holography and redundancy could shape future computing. Cox acknowledges the mind-bending complexity of this frontier, where the laws of nature and technological innovation intersect.

Dirty secrets of a key dataset behind many famous ML models

BookCorpus is a widely used machine learning dataset that has helped train numerous influential language models. However, researchers who examined it found several concerning issues. First, it contains only a 2% sample of the books on the Smashwords website, and many of those books are duplicates, leaving only 7,185 unique books. Second, the dataset appears to violate copyright restrictions for hundreds of books. Third, it is skewed towards certain genres, such as romance and vampire fiction, which could bias language models trained on it. Fourth, it may have a skewed representation of different religions. Finally, contributions are highly lopsided, with a small number of authors supplying the majority of the content.
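Findings like "many duplicates" and "lopsided contributions" come down to simple counting. The Python sketch below is a hypothetical illustration, not the researchers' actual method: it counts exact duplicates by hashing lightly normalized text and measures what fraction of a corpus comes from its top `k` authors. The toy corpus and the helper names are made up for the example, and real deduplication would also need to catch near-duplicates (for example, the same book with a different preface), which exact hashing misses.

```python
import hashlib
from collections import Counter


def normalize(text: str) -> str:
    # Lowercase and collapse whitespace so trivially reformatted copies match.
    return " ".join(text.lower().split())


def count_unique(books: dict[str, str]) -> tuple[int, int]:
    """Return (number of files, number of distinct texts) by hashing normalized text."""
    digests = {
        hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        for text in books.values()
    }
    return len(books), len(digests)


def top_author_share(author_of: dict[str, str], k: int) -> float:
    """Fraction of files written by the k most prolific authors."""
    counts = Counter(author_of.values())
    return sum(n for _, n in counts.most_common(k)) / len(author_of)


# Hypothetical toy corpus: file name -> text, and file name -> author.
books = {
    "a.txt": "Once upon a time",
    "b.txt": "once  upon a TIME",  # same book, different formatting
    "c.txt": "The end",
}
authors = {"a.txt": "X", "b.txt": "X", "c.txt": "Y"}

print(count_unique(books))  # (3, 2)
print(top_author_share(authors, 1))
```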

akkoma.social

Akkoma is a new kind of social network: a decentralized microblogging platform that is part of the "fediverse", a network of independent servers running different social media software. It implements the ActivityPub protocol, so its users can interact with people on other fediverse services. Akkoma differentiates itself with a focus on custom expression, such as emoji reactions and rich text markup, and it is consistently faster than Pleroma, the software it was forked from. Akkoma is easy to sign up for and use, with features like unlimited attachments, post editing and polls.
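ActivityPub is what lets an Akkoma user reach people on other fediverse services: posts travel between servers as JSON activities in the ActivityStreams 2.0 vocabulary, delivered to other servers' inboxes over HTTP. The Python sketch below only builds the JSON for a public post (a `Create` activity wrapping a `Note`). The domain `akkoma.example`, the followers URL and the id scheme are hypothetical, and real servers also sign their deliveries (commonly with HTTP Signatures) and may add their own extension fields.

```python
import json

AS_CONTEXT = "https://www.w3.org/ns/activitystreams"
PUBLIC = "https://www.w3.org/ns/activitystreams#Public"  # special "everyone" address


def make_create_note(actor: str, note_id: str, html: str) -> dict:
    """Wrap a post (a Note object) in a Create activity, ActivityStreams 2.0 style."""
    followers = actor + "/followers"  # hypothetical URL layout; servers choose their own
    note = {
        "id": note_id,
        "type": "Note",
        "attributedTo": actor,
        "content": html,  # post body as HTML
        "to": [PUBLIC],
        "cc": [followers],
    }
    return {
        "@context": AS_CONTEXT,
        "id": note_id + "/activity",  # hypothetical id scheme
        "type": "Create",
        "actor": actor,
        "object": note,
        "to": note["to"],
        "cc": note["cc"],
    }


activity = make_create_note(
    actor="https://akkoma.example/users/alice",    # hypothetical account
    note_id="https://akkoma.example/objects/123",  # hypothetical object id
    html="<p>Hello, fediverse!</p>",
)
print(json.dumps(activity, indent=2))
```

Because every compliant server understands the same `Create`/`Note` vocabulary, a Mastodon or Pleroma server receiving this activity can display the post even though it was written on Akkoma.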

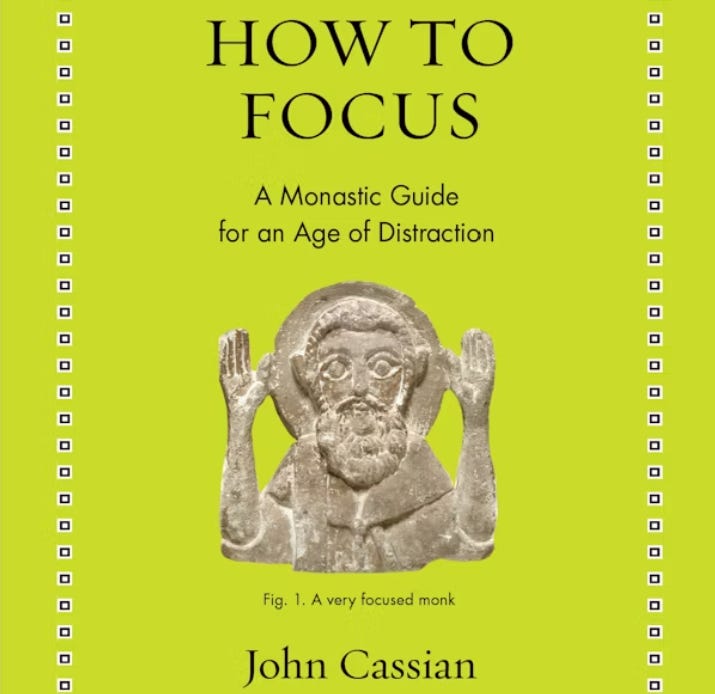

How to focus

How to Focus is a collection of insights and advice on improving attention and concentration, drawn from the writings of the 5th-century Christian monk John Cassian. Cassian and his companion Germanus traveled to Egypt and interviewed wise elder monks, who offered techniques such as setting goals, training the body, managing memory, using mantras, and consulting others. The monks acknowledged that distraction is an age-old problem, but argued that it can be confronted and overcome through disciplined practice. The book features a new translation by Jamie Kreiner and presents the original Latin text alongside the English. Its strategies can help even non-monastics train their focus on what matters most.